If you’ve used AI to create software in the last few months, you’ve felt this. You scaffold a service with an AI coding assistant in an afternoon. It doesn’t matter whether it’s Anthropic Claude Code, OpenAI Codex, Cursor connected to your favorite MCP server, Vercel v0, Replit, or anything else. Clean interfaces, sensible abstractions, edge cases handled, tests passing. It looks good. It reads well. Maybe a senior engineer friend or coworker glances at it and nods, and then it ships. Three days later you’re in a 2am incident channel watching a latency spike that no amount of code review would have predicted, tracing through a cascade your design never considered, because the design assumed the happy path, or because the AI produced code that was theoretically correct but didn’t hold up in the real world.

That gap between correct in design and correct under load is where software goes to get humbled. And it’s a gap that’s getting wider, not narrower.

Here’s what’s actually happening right now: AI is genuinely good at the part of engineering that lives in the abstract. Given a well-scoped problem, it can reason about data flows, architect clean systems, anticipate documented failure modes, and produce code that is theoretically correct. If you’re good at defining requirements and feeding them into the prompt, what AI can output is at times pretty magical (and at times straight crap, though that should improve). This part of the job is changing fast, and anyone who isn’t using these tools is leaving real velocity on the floor. That might be fine at an F500 company, but for the rest of us, our biggest enemy is time.

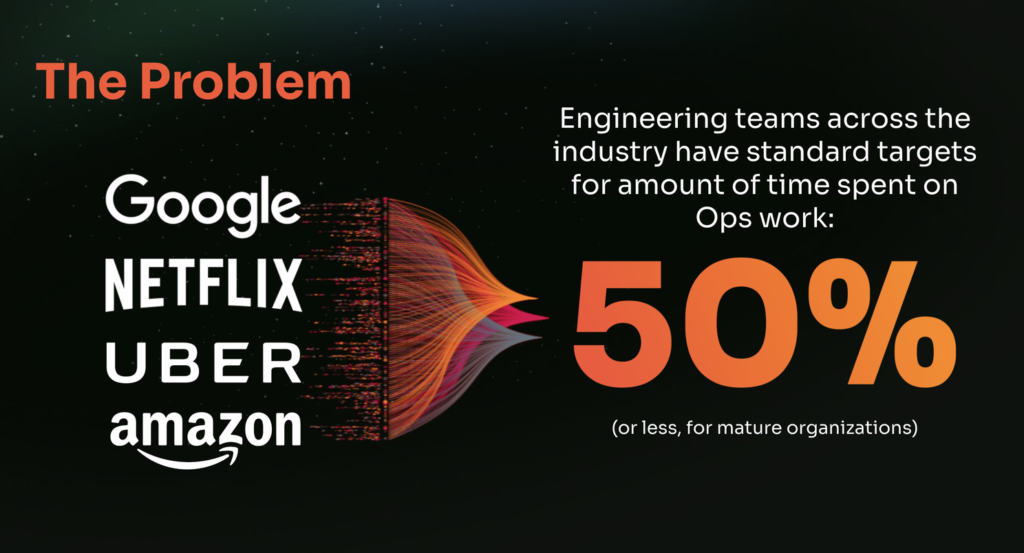

If your team is moving fast and there’s a lot of chaos, your percentage might even be higher.

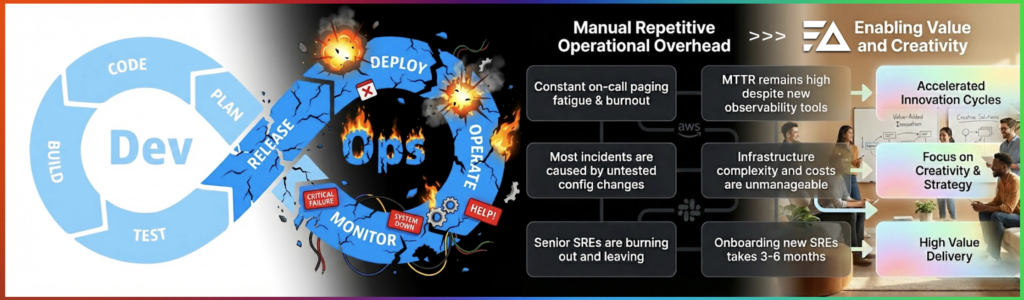

Companies like Google, Netflix, Uber, and Amazon have well-documented goals not just for SRE teams but for all of engineering, as these lines blur more and more, especially among startups. AI is accelerating creation, and ops is currently limiting top speed.

As you try to move fast, AI models an idealized version of your system. It doesn’t know about your traffic spikes at 4am PT on a Monday. It doesn’t know about the upstream service that gets flaky whenever it rains inside us-east-1 (or when a datacenter literally gets bombed; unfortunately, we live in that reality). It can’t model the decade of accumulated config drift, the cache invalidation strategy that made sense in 2019, or the ways your users behave that your product spec never anticipated. The AI has never been paged. It has never watched a metric go sideways in real time and felt that specific, clarifying panic. It reasons about systems the way textbooks reason about systems… cleanly, without the scar tissue.

You as the engineer have the scar tissue. That’s not a liability. Right now, it’s one of the most valuable things you bring to the table.

Here’s where the opportunity lives, and it’s genuinely large: the prototype to production feedback loop.

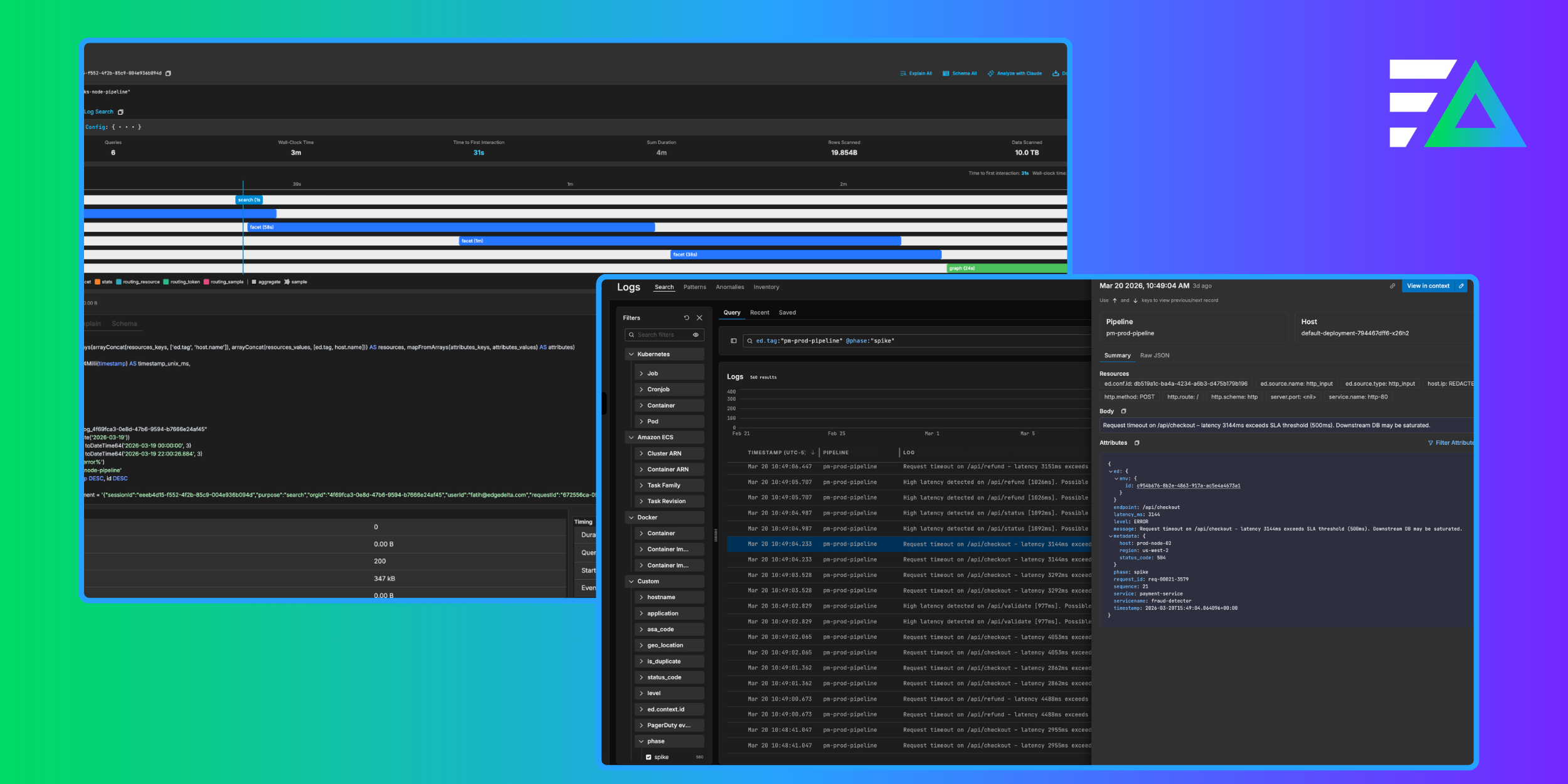

The teams that are struggling right now share a common pattern. They build fast (often with AI assistance), they ship, and then they instrument. Observability is a post-launch concern. They add dashboards after something breaks. They write queries after customers notice. They build alerts in response to incidents rather than in anticipation of them.

That’s a backwards workflow in any era, but in the AI era it’s chaos that compounds over time. When you’re shipping code faster than ever (and this acceleration is not reversing; Pandora’s box has been opened), your feedback loop from production signal back to development iteration needs to match that pace. You cannot bolt observability on after deployment. By then, you’re already behind.

It’s either more devs doing ops, or more ops people and tools, but this inverse relationship is an issue.

The engineers who are pulling ahead right now are treating observability as a first-class citizen inside the development loop itself. Before code ships, they’ve defined what “working” looks like in production terms: what metrics matter, what thresholds signal degradation, what traces will tell them the thing they most need to know when something goes wrong at 2am. They’re thinking about instrumentation the same way they think about interfaces… as a core design decision, not an afterthought.
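One way to make instrumentation part of the interface is to attach it where the function is defined, so every call emits latency and error signals from the first deploy. Below is a minimal Python sketch under stated assumptions: the `instrumented` decorator, the in-process `METRICS` registry, and the `charge` function are all hypothetical, standing in for whatever metrics client (a Prometheus client, OpenTelemetry, a vendor agent) and business logic your team actually has.

```python
import time
from collections import defaultdict
from functools import wraps

# Hypothetical in-process registry; a real service would export these
# through its metrics client instead of keeping them in a dict.
METRICS = defaultdict(lambda: {"calls": 0, "errors": 0, "latencies_ms": []})


def instrumented(name):
    """Record call count, error count, and latency for the wrapped function."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            stats = METRICS[name]
            stats["calls"] += 1
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                stats["errors"] += 1
                raise
            finally:
                # Latency is recorded on both the success and failure paths.
                stats["latencies_ms"].append((time.perf_counter() - start) * 1000)
        return wrapper
    return decorator


@instrumented("checkout.charge")
def charge(amount_cents):
    # Hypothetical business logic standing in for AI-generated code.
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    return {"status": "ok", "amount": amount_cents}


if __name__ == "__main__":
    charge(500)
    try:
        charge(0)
    except ValueError:
        pass
    stats = METRICS["checkout.charge"]
    print(stats["calls"], stats["errors"], len(stats["latencies_ms"]))
```

The mechanics matter less than the placement: the signal is defined alongside the code it describes, so it already exists when the first incident arrives.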

We are shortening the cycle between “I think this will work” and “here’s what actually happens.” That cycle is now the primary constraint on how fast a team can improve a system. Code itself has never been cheaper. The learning loop, by contrast, is very expensive when observability is disjointed. When will AI dev tools start incorporating Datadog, Splunk, Elastic, Dynatrace, Grafana Labs, or Edge Delta-style functionality by default?

Practically, this means a few shifts in how you work:

1- Instrument before you need it. When you’re generating code fast, the temptation is to stay in generation mode. Resist it. The instrumentation you skip today is the context you’ll desperately want at 2am next week.

2- Define your production contract explicitly. For every service, know the three metrics that tell you whether it’s healthy. (You can actually ask AI for this: prompt it to read your source and propose which metrics the service should track. It’s pretty good at this exercise.) You don’t need twenty metrics; three should suffice for this loop. If you can’t name them before you ship, you don’t understand the service well enough yet, or you just haven’t asked AI to help you.

3- Treat production signals as the ground truth for development decisions. Your staging environment is a hypothesis. Production is the answer. Build the tooling that makes that answer legible, fast, and actionable.
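A production contract can be written down as plain data and checked against what production actually reports. The Python sketch below is illustrative only: the `Threshold` and `ProductionContract` names, the three metrics (p99 latency, error rate, throughput), and every threshold value are assumptions chosen for the example, not recommendations for any real service.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Threshold:
    """One health metric and the bound that signals degradation."""
    metric: str
    limit: float
    higher_is_bad: bool = True  # set False for metrics like throughput

    def violated(self, value: float) -> bool:
        return value > self.limit if self.higher_is_bad else value < self.limit


@dataclass(frozen=True)
class ProductionContract:
    service: str
    thresholds: Tuple[Threshold, ...]

    def evaluate(self, observed: Dict[str, float]) -> List[str]:
        """Return human-readable violations; an empty list means healthy.

        A metric missing from `observed` is reported as well, since a
        contract you cannot measure is not being enforced.
        """
        problems = []
        for t in self.thresholds:
            if t.metric not in observed:
                problems.append(f"{t.metric}: no data")
            elif t.violated(observed[t.metric]):
                problems.append(f"{t.metric}: {observed[t.metric]} breaches {t.limit}")
        return problems


# Hypothetical contract: three metrics, numbers chosen only for illustration.
checkout = ProductionContract(
    service="checkout",
    thresholds=(
        Threshold("p99_latency_ms", 400.0),
        Threshold("error_rate", 0.01),
        Threshold("requests_per_min", 50.0, higher_is_bad=False),
    ),
)

if __name__ == "__main__":
    # In practice these values would come from your observability backend.
    print(checkout.evaluate({"p99_latency_ms": 520.0, "error_rate": 0.002}))
```

Because the contract is data rather than tribal knowledge, the same three definitions could feed alert rules or a post-deploy check; that wiring is left out of this sketch.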

I was on-site talking to a tech leader this week, and I mentioned keeping a human in the loop for these decisions by default. The reply I got was surprising: “But why? Humans are the weakest part of the system.” His forward-thinking perspective definitely made me smile.

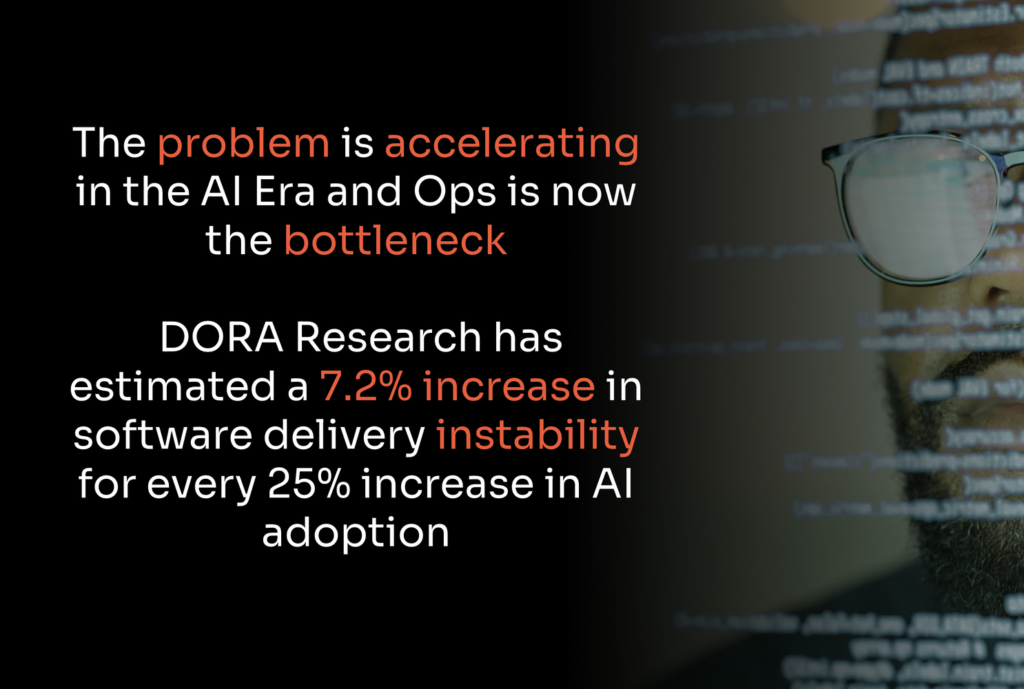

AI will keep getting better at designing software. The quality of AI-generated code will improve, the scope of problems it can handle will expand, and the velocity gains will compound. That’s not really the bottleneck anymore.

The bottleneck is ops. We need ops teams now more than ever. How quickly you can close the loop between what your system does in the real world and what you thought it would do… this defines your terminal velocity.

We want these AI machines to keep the systems running so humans can keep the innovations coming.

The engineering teams who treat observability as an input to the design rather than overhead, who build the feedback loop as a core part of the system, where every incident becomes an insight, every signal tightens the architecture, and the gap between what is designed and what production does gets narrower over time… these teams win in this era.

Tighten that loop. Everything else follows.