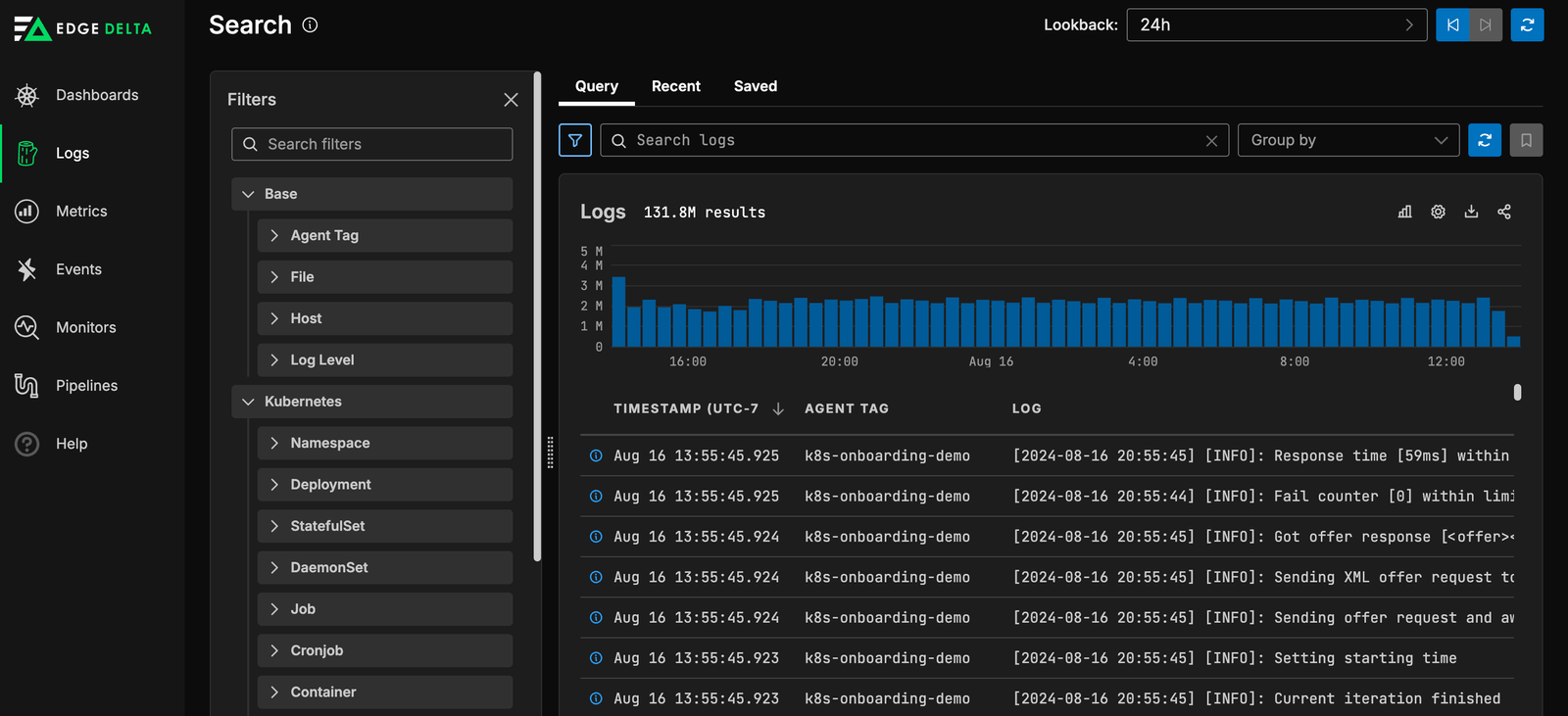

Unified logging for AI Teammates

Collect, pre-process, and route all your logs through a single unified pipeline, giving every AI Teammate the high-quality signal they need to triage and resolve incidents.

Fragmented logging architectures limit what AI can do

Teams use several tools to stay on top of data growth and meet the needs of different users. As a byproduct, logging architectures have become sprawling and difficult to scale. It’s not uncommon for teams to use a combination of:

Open-Source Agents

Fall short on performance and offer no fleet management capabilities.

Platform-Specific Agents

Create vendor lock-in and limit flexibility.

Data Pipelines

Homegrown versions discard data based on guesses about what you’ll need later.

One architecture, every AI teammate

Edge Delta consolidates log collection, pre-processing, and routing into a single agent, giving every AI Teammate a clean, unified signal across your entire environment. No more fragmented pipelines producing inconsistent data.

Flexible Data Collection

AI Teammates pull from any data source across your environment and route the same signal to multiple destinations, so no Teammate ever works from an incomplete picture.

Petabyte-Scale Reliability

AI Teammates work against petabytes of log data in real time, backed by infrastructure built to never drop events or fall behind under load.

Centralized Agent Management

Deploy and manage agents fleet-wide from a single SaaS backend, so every AI Teammate always has access to a healthy, up-to-date data feed.

Ready to take the next step?

Learn more about our use cases and how we fit into your observability stack.

Data Pipelines for Every Teammate

Gain Visibility Into All Log Data

Index Any Level of Fidelity

Transform Datasets

“This is not just about doing what you used to do in the past, and doing it a little bit better. This is a new way to see this world of how we collect and manage our observability pipelines.”

Ben Kus, CTO, Box

Join Engineering Teams That Are Embracing AI

It only takes a couple of minutes to start running AI Teammates in production.