Intelligent Telemetry Pipelines

The intelligent foundation that gives you control and flexibility over your logs, metrics, traces, and events. Improve efficiency and MTTR with AI recommendations, enrichments, and optimizations out of the box. Forward your observability and security data from any source to any destination with ease.

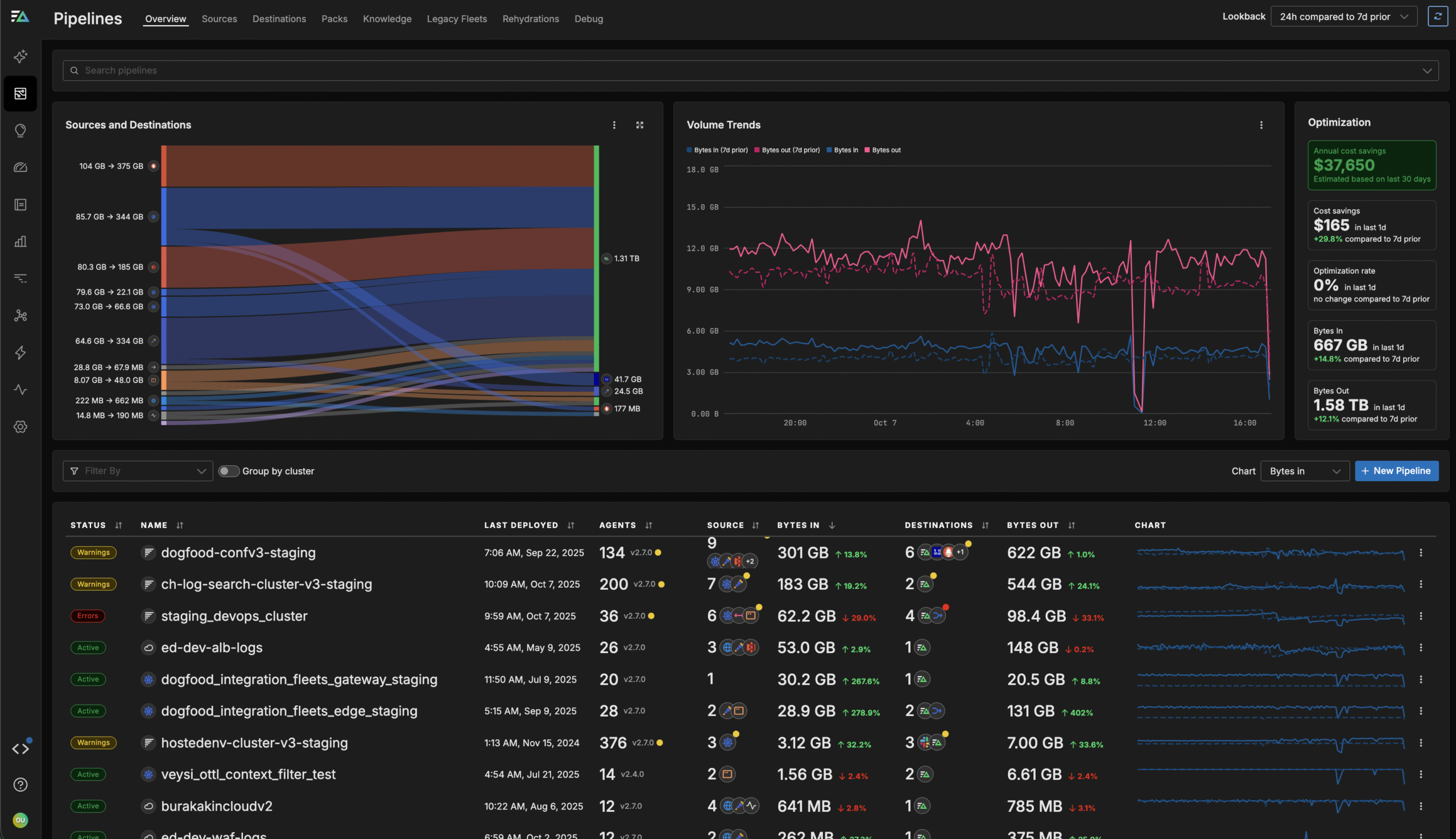

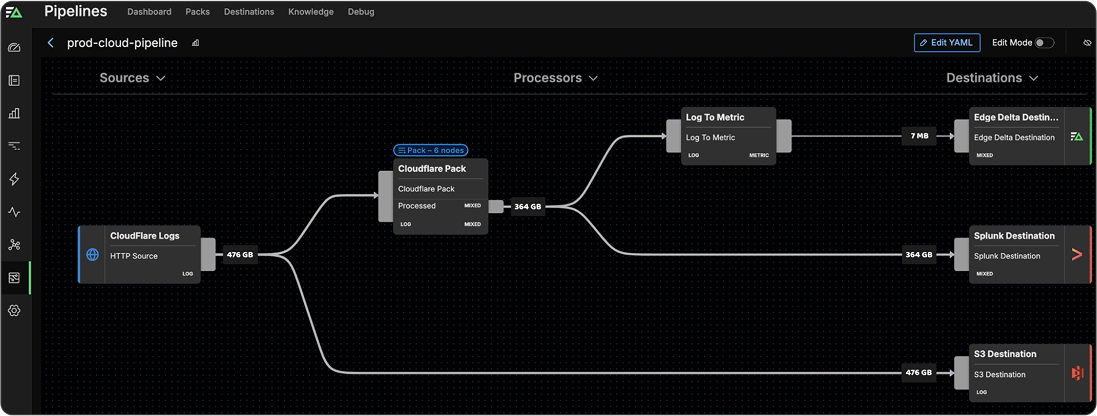

Pipelines Give You Full Control Over Your Data Pre-Index

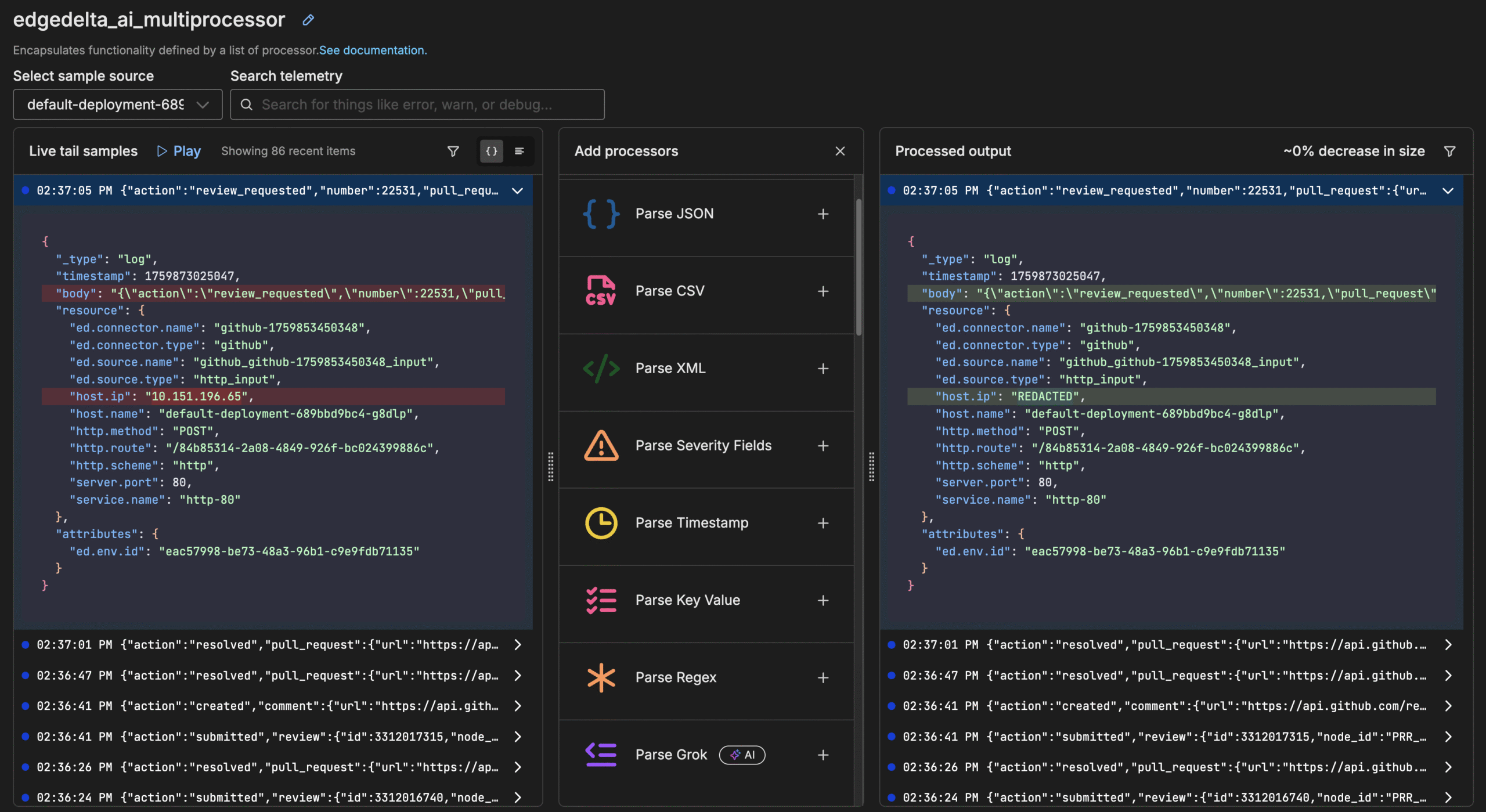

Automate Insights

Use intelligent insights and recommendations to automatically enrich, filter, transform, optimize, and surface patterns in your data in real time.

Route Flexibly

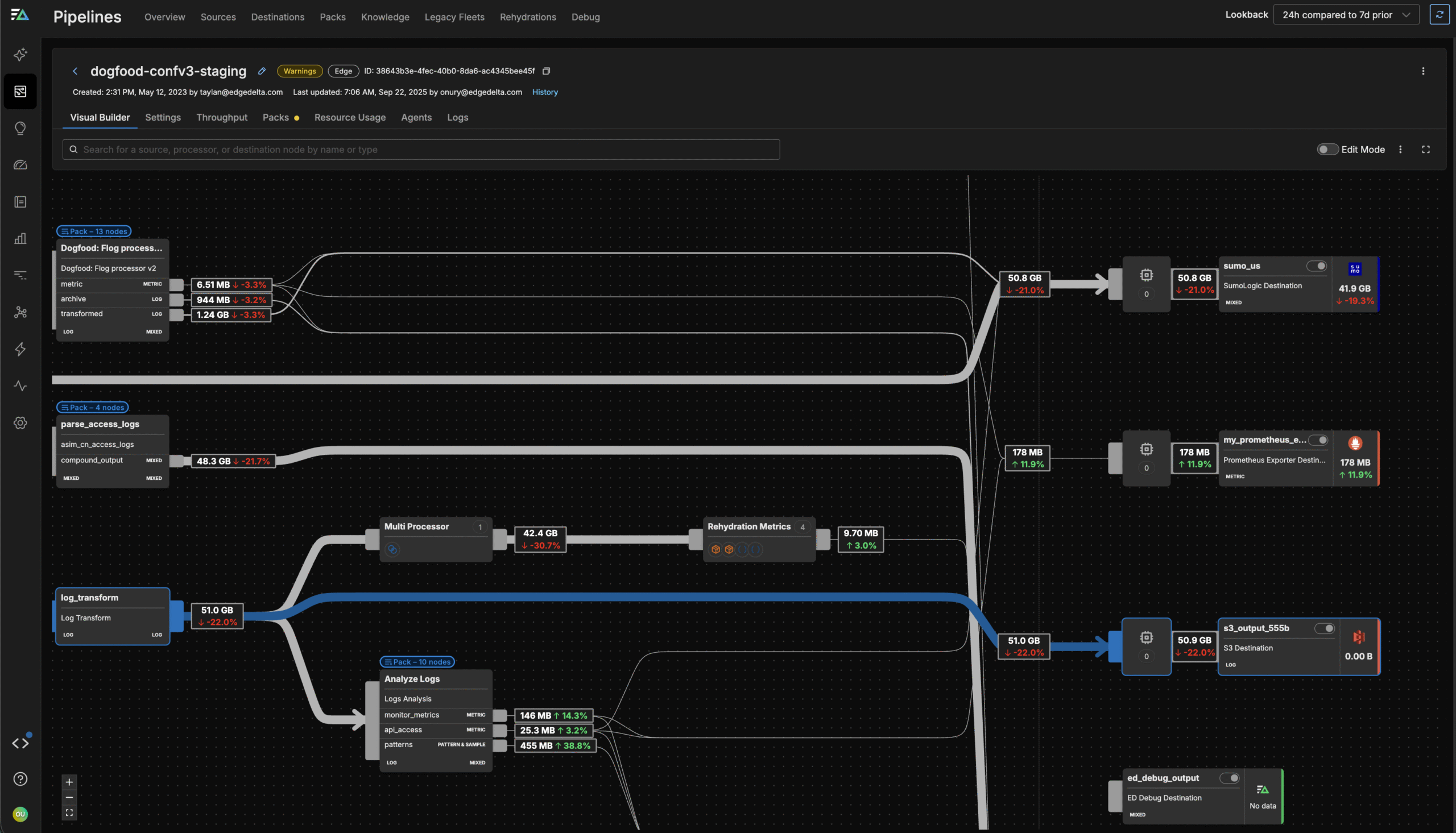

Aggregate, enrich, transform, and route your data from any source to any destination, in any format, including OTel and OCSF.
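To make the filter, enrich, and route steps concrete, here is a minimal Python sketch of the general idea. It is not Edge Delta's API or configuration format: the function names, the `env` field, and the `alerting` and `archive` destination names are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict

def keep(event: dict) -> bool:
    # Filter step: drop noisy debug events before they reach any destination.
    return event.get("level") != "DEBUG"

def enrich(event: dict) -> dict:
    # Enrich step: attach a (hypothetical) environment tag to every event.
    return {**event, "env": "prod"}

def route(event: dict) -> str:
    # Route step: send errors to one (hypothetical) destination, the rest to another.
    return "alerting" if event.get("level") == "ERROR" else "archive"

def run_pipeline(events: list[dict]) -> dict[str, list[dict]]:
    # Apply filter -> enrich -> route and group events by destination name.
    destinations: dict[str, list[dict]] = defaultdict(list)
    for event in events:
        if not keep(event):
            continue
        enriched = enrich(event)
        destinations[route(enriched)].append(enriched)
    return dict(destinations)

events = [
    {"level": "DEBUG", "msg": "cache hit"},
    {"level": "INFO", "msg": "user login"},
    {"level": "ERROR", "msg": "db timeout"},
]
routed = run_pipeline(events)
```

In this sketch the debug event is dropped, the error lands in `alerting`, and the info event lands in `archive`, each carrying the added `env` field.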

Optimize Costs

Utilize flexible pipelines with auto-discovery to build, test, manage, and control your data ingest and costs at scale.

Build and Manage Pipelines with Ease

Build via Chat

Construct and fine-tune pipelines in seconds by chatting with OnCall AI, the autonomous leader of your agentic AI Team.

Edit in an Intuitive UI

Use the graphical editor to build pipelines yourself or refine those created by OnCall AI. Minimize build time with pre-built packs and preview transformations to ensure safe deployment.

Stay Secure

Enforce granular role-based access control (RBAC) so you can expose only the necessary portions of the pipeline to the appropriate teams.

Gain Control of Your Telemetry Data Today

See why leading engineering teams rely on Edge Delta to manage their observability and security data. You'll be up and running in a matter of minutes.

Pipelines Let Engineers All Around the World Simplify Their Data Architecture

“This is not just about doing what you used to do in the past, and doing it a little bit better. This is a new way to see this world of how we create and manage our pipelines.”

Ben Kus, CTO, Box

Route Data from Any Source to Any Destination

Edge Delta supports 50+ integrations across analytics, alerting, storage, and more. We can help you consolidate agents and routing infrastructure, and avoid vendor lock-in.

Industry Adoption of Telemetry Pipelines

Over 48% of Fortune 100 companies are actively utilizing Telemetry Pipelines for their data.

Over 27% of Fortune 500 companies are using some form of Telemetry Pipelines to observe data.

The compounded effects of not utilizing a telemetry pipeline lead to increased costs, reduced service quality, and competitive disadvantage.

If you’re not using data pipeline management for security and IT, you need to.

By 2026, 40% of log telemetry will be processed through a telemetry pipeline product, an increase from less than 10% in 2022.

Get Up and Running in Minutes

Edge Delta's end-to-end Telemetry Pipelines work out of the box. Get set up in minutes and gain full control over your telemetry data today.