The math is untenable. The cybersecurity industry faces 4.8 million unfilled positions globally in 2025, while security teams are asked to handle cloud migrations, compliance expansions, supply chain vulnerabilities, AI governance, and traditional security operations — all with headcount that hasn’t budged.

But here’s what’s starting to work: organizations that treat AI agents as force multipliers rather than headcount replacements are actually closing the capability gap. This isn’t happening by magic, but through systematic augmentation of what their existing teams can accomplish.

The Scope Creep Crisis: What Modern Security Teams Actually Do

Most security teams don’t resemble the 24/7 SOCs you see in vendor demos. A typical team is two to five people juggling fifteen different responsibilities. Security analyst job descriptions now routinely include incident response, compliance reporting, vendor risk assessments, security awareness training, vulnerability management, and “other duties as assigned.” That’s not a job. That’s an entire department compressed into one human.

Here’s what a typical day looks like for most security teams:

7:00 AM: Check overnight alerts (87 of them, 85 of which are false positives)

8:30 AM: Emergency meeting about a vendor questionnaire due tomorrow

10:00 AM: Start investigating that anomaly from three days ago

11:00 AM: Drop everything for a “quick” compliance evidence request

2:00 PM: Back to the anomaly investigation (where was I?)

3:30 PM: Board deck needs security metrics by EOD

5:00 PM: Finally getting to that critical patch from last week

7:00 PM: Still working on the board metrics

The cruel irony is that so much of this work is mechanical — gathering evidence, correlating logs, formatting reports. It’s work that must be done but doesn’t require the strategic thinking these professionals were hired to provide. We’re paying security experts to be data janitors.

Foundation First: Why Data Quality Determines Everything

A common failure pattern in security automation projects is the data quality death spiral: garbage data creates cascading problems. Bad log formatting triggers false positives. False positives create alert fatigue. Alert fatigue leads to missed threats. Teams end up turning off the automation entirely — not because the concept was wrong, but because the foundation wasn’t solid.

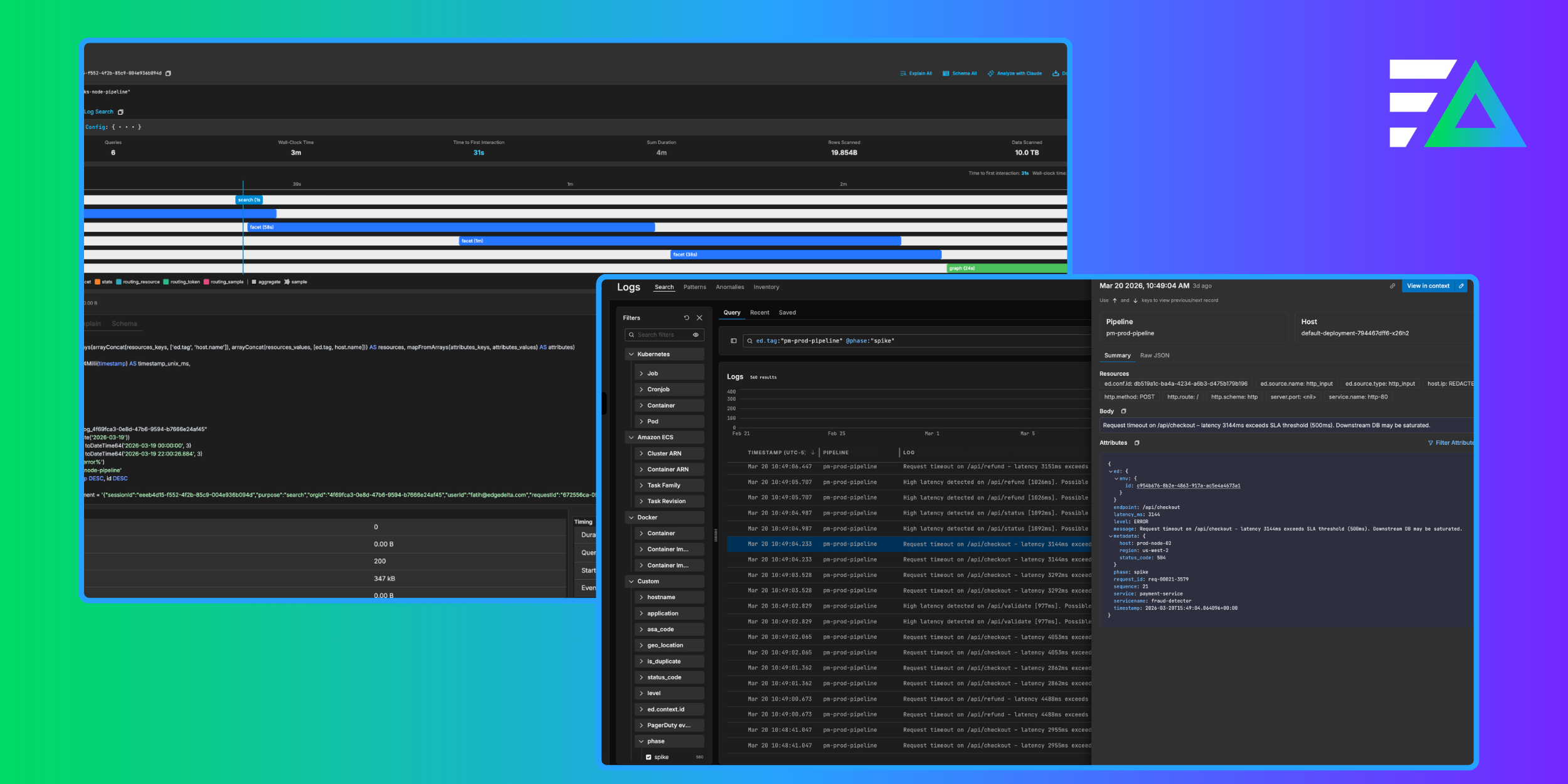

This is where AI agents offer their first real value — not in making decisions, but in cleaning up the mess before decisions need to be made. At Edge Delta, we’ve seen organizations cut their false positive rates by 78% just by implementing AI-driven data normalization at ingestion. That’s before any fancy threat detection even kicks in.

The practical wins are immediate:

Automatic classification: AI agents identify and categorize log sources without manual mapping. One financial services client went from spending 20 hours per week on log source management to zero.

PII detection at scale: Instead of discovering sensitive data in logs during an audit, agents identify and mask it at ingestion. No more scrambling to prove compliance after the fact.

Noise reduction that actually works: Not just simple deduplication, but contextual understanding of what matters. When the same error appears 10,000 times in an hour, the agent recognizes the pattern and consolidates it into a single, actionable alert.

Consistent formatting across chaos: Different systems speak different languages. Your firewall logs look nothing like your application logs. AI agents translate everything into a common schema without losing fidelity.
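To make the masking and consolidation ideas above concrete, here is a minimal sketch in Python. It is not how any particular product works: the regular expressions, the placeholder tokens, and the function names `mask_pii`, `signature`, and `consolidate` are all assumptions for illustration, and real PII detection needs far broader coverage than a single email pattern.

```python
import re
from collections import Counter

# Hypothetical patterns for illustration; production PII coverage is much wider.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
NUMBER_RE = re.compile(r"\b\d+\b")


def mask_pii(message: str) -> str:
    """Replace email addresses with a fixed token before the log is stored."""
    return EMAIL_RE.sub("<email>", message)


def signature(message: str) -> str:
    """Collapse variable fields so repeats of the same error share one key."""
    message = IPV4_RE.sub("<ip>", message)
    return NUMBER_RE.sub("<n>", message)


def consolidate(messages):
    """Return (signature, count) pairs, most frequent first."""
    counts = Counter(signature(mask_pii(m)) for m in messages)
    return counts.most_common()
```

Feeding `consolidate` ten thousand copies of the same failure with different addresses and counters would yield one signature with a count of 10,000, which is the shape of the single actionable alert described above.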

The Multiplication Effect: Where AI Agents Create Leverage

AI agents don’t just lay the groundwork for a strong security posture — they also amplify human efforts across security operations. By taking on the mechanical work that slows teams down, agents free humans to focus on higher-value tasks. The following areas illustrate where that multiplication effect becomes most evident.

Compliance Automation That Actually Helps

Compliance consumes a large share of most security teams’ time. Gathering evidence, updating documentation, and checking and re-checking everything (not to mention the anxiety about missing something) take a heavy toll. With AI agents continuously monitoring and documenting control effectiveness, the team can spend that time and energy on updating controls or threat hunting while the agents handle the mechanical work of documentation and audit prep.

The difference is more than incremental. When properly configured, agents can collect evidence continuously rather than in quarterly scrambles. They can check configurations and document drift in real time rather than during annual reviews. With the right data retention and indexing, when an auditor asks about your encryption standards from six months ago, a well-implemented agent system can retrieve the exact configuration state from that moment, not just what you hope was true.
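As a rough illustration of point-in-time retrieval and drift detection, the sketch below keeps an in-memory history of configuration snapshots. The `ConfigHistory` class and `drift` function are hypothetical names, snapshots are assumed to be flat dictionaries recorded in time order, and a real system would persist them in durable, indexed storage.

```python
from bisect import bisect_right
from datetime import datetime


class ConfigHistory:
    """Append-only record of configuration snapshots, ordered by time."""

    def __init__(self):
        self._times = []
        self._snapshots = []

    def record(self, when: datetime, config: dict) -> None:
        """Store a snapshot; snapshots are assumed to arrive in time order."""
        self._times.append(when)
        self._snapshots.append(dict(config))

    def state_at(self, when: datetime):
        """Return the latest snapshot taken at or before `when`, or None."""
        idx = bisect_right(self._times, when)
        return self._snapshots[idx - 1] if idx else None


def drift(baseline: dict, current: dict) -> dict:
    """Map each changed key to its (expected, actual) pair."""
    keys = baseline.keys() | current.keys()
    return {
        k: (baseline.get(k), current.get(k))
        for k in sorted(keys)
        if baseline.get(k) != current.get(k)
    }
```

An auditor's "what was true six months ago" question then becomes a `state_at` lookup, and `drift` against the approved baseline shows exactly which settings differed at that moment.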

The organizations that implement continuous control monitoring typically see dramatic improvements in their audit performance — not because they suddenly become more secure, but because they can finally prove the security they already had.

Investigation Acceleration Without Shortcuts

The average security investigation involves correlating weeks of data across a multitude of tools, looking for patterns humans can barely perceive. An AI agent can process six months of logs across a dozen systems in minutes. But speed isn’t the real value — it’s the connections the agent finds that humans would miss.

Consider the kinds of patterns that are nearly impossible for humans to spot: API calls that happen days before an incident from completely different IP ranges, or subtle timing correlations across disparate systems. These connections exist in the data, but finding them manually requires either incredible luck or impossible patience. AI agents can systematically identify these patterns without the cognitive limitations that make human correlation difficult across large time spans and data sources.

This isn’t about replacing investigator intuition. It’s about giving investigators superpowers. The agent handles the mechanical work — timeline construction, indicator enrichment, pattern matching — so humans can focus on understanding adversary intent and crafting response strategies.

Proactive Security (The Stuff That Never Gets Done)

Every security team has a list titled “Important But Not Urgent” that never gets touched. Certificate monitoring. Permission reviews. Configuration drift detection. Supply chain assessment updates. These are the hygiene tasks that prevent incidents but always get bumped for whatever’s on fire today.

AI agents don’t have competing priorities. They can monitor certificate expiration while you’re handling an incident. They can review IAM permissions while you’re in a compliance meeting. They can track configuration changes while you’re asleep.

Certificate management is a perfect example of where this matters. Most organizations don’t actually know how many SSL certificates they have across their infrastructure until something expires and breaks. An AI agent can do systematic inventory and analysis — the kind of comprehensive review that teams perpetually postpone because something more urgent always comes up.
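The expiry check itself is simple once an inventory exists. The sketch below assumes the inventory has already been collected as a mapping from hostname to the certificate's not-after time. Building that inventory is the hard part and is out of scope here. The function name `expiring_soon` and the 30-day default window are illustrative choices, not a recommendation.

```python
from datetime import datetime, timedelta, timezone


def expiring_soon(inventory, now, window_days=30):
    """Return (host, days_left) for certificates expiring within the window.

    `inventory` maps a hostname to its certificate's not-after time.
    Already-expired certificates are included with negative days_left.
    """
    cutoff = now + timedelta(days=window_days)
    flagged = [
        (host, (not_after - now).days)
        for host, not_after in inventory.items()
        if not_after <= cutoff
    ]
    # Most urgent first: expired certificates, then the nearest expirations.
    return sorted(flagged, key=lambda item: item[1])
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the check easy to test and to replay against historical inventories.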

The Collaborative Model: Humans and Agents as Partners

A common misconception holds that AI is ready to take over just about everything. That’s not the reality. Humans are still necessary for many functions, and when humans direct and collaborate with agentic AI, the results are far better than what AI could achieve on its own and far more efficient than what humans could achieve on theirs.

What Agents Do Better

Agents excel at volume and consistency. They can process millions of events without fatigue, maintain perfect recall across unlimited time periods, and apply rules uniformly regardless of workload. They don’t get bored reviewing the 10,000th log entry or miss patterns because it’s Friday afternoon.

They’re also infinitely patient with repetitive tasks, such as checking every single configuration against policy, comparing every login against baseline behavior, and correlating every alert with threat intelligence. These tasks are mind-numbing for humans but trivial for agents.

What Humans Must Own

Strategic decisions require human judgment. When an agent identifies unusual behavior from a key executive’s account, it can’t determine if this is the CEO trying a new tool or an adversary establishing persistence. That requires understanding organizational context, politics, and risk appetite that no agent possesses.

Humans own stakeholder communication. Consider times when you’ve needed to explain to the board why you were recommending a $2M security investment, or convince developers to refactor authentication, or negotiate with a vendor about their security practices. These conversations require emotional intelligence and persuasion that remain uniquely human.

The Handoff Points

The most successful implementations establish clear boundaries. Agents gather and correlate data, then present findings with context. Humans review, decide, and direct response. Agents execute the mechanical steps of response. Humans verify and adjust.

Think of it like flying a modern aircraft. The autopilot handles routine navigation and stability. The pilot makes strategic decisions and handles exceptions. Both are essential, and neither is sufficient alone.

Implementation Without Disruption

Introducing AI agents into your security program doesn’t have to be a high-risk overhaul. With the right approach, teams can adopt automation gradually, validate each step, and build trust along the way.

Start Where It Hurts Most

Don’t try to automate everything at once. Pick your biggest time sink that requires the least human judgment. For most teams, this is log analysis and correlation. For others, it’s compliance evidence collection. Start there.

Measure everything before you begin. How long does investigation take today? How many false positives do you process? How many hours are spent on compliance prep? You can’t prove value without baselines.

The Crawl-Walk-Run Progression

Phase 1: Read-only analysis. Agents analyze and report but take no action. This builds confidence and reveals any issues with data quality or logic.

Phase 2: Supervised recommendations. Agents suggest actions but humans execute. This trains both the agents and the humans on what good looks like.

Phase 3: Autonomous action with approval gates. Agents can act on pre-approved scenarios but escalate anything unusual.

Phase 4: Full automation with exception handling. Agents handle routine work entirely, only involving humans for exceptions.

Most organizations take 6-12 months to progress through these phases. That’s fine. Better to move deliberately than to lose trust through premature automation.
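One way to picture the four phases is as a single gate that every proposed agent action passes through. The sketch below is an assumption about how such a gate could look, not a description of any product: the `Phase` enum, the `decide` function, the `pre_approved` set, and the `is_unusual` flag are all hypothetical, and deciding what counts as "unusual" is left to whatever detection logic sits upstream.

```python
from enum import IntEnum


class Phase(IntEnum):
    READ_ONLY = 1
    SUPERVISED = 2
    APPROVAL_GATED = 3
    FULL_AUTOMATION = 4


def decide(phase: Phase, action: str, pre_approved: set, is_unusual: bool) -> str:
    """Return 'report', 'recommend', 'execute', or 'escalate' for a proposed action."""
    if phase == Phase.READ_ONLY:
        return "report"       # Phase 1: analyze and report, never act
    if phase == Phase.SUPERVISED:
        return "recommend"    # Phase 2: suggest, a human executes
    if is_unusual:
        return "escalate"     # Phases 3 and 4: exceptions always go to a human
    if phase == Phase.APPROVAL_GATED and action not in pre_approved:
        return "escalate"     # Phase 3: only pre-approved scenarios run unattended
    return "execute"
```

Keeping the phase as an explicit setting means moving from one phase to the next is a deliberate configuration change that can be reviewed and rolled back.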

Building Trust Through Transparency

Every action an agent takes must be auditable. Every decision must show its reasoning. This isn’t just for compliance — it’s for building confidence. When your team can see exactly why an agent flagged something as suspicious, they learn to trust its judgment.

Regular reviews are essential. Conduct weekly reviews of agent actions in Phase 1, and move to a monthly cadence by Phase 4. Look for patterns in what the agent gets wrong. These usually reveal either data quality issues or missing context that needs to be added to the system.

Metrics That Prove Force Multiplication

Many security teams’ metrics focus on activity that demonstrates how hard they are working: how many alerts, how many incidents, how many investigations, how many closed tickets. These are all important activities, to be sure, and they answer the question, “What does security do around here?” But they do not answer the more important question: “What value does it add?”

Moving Beyond Activity Metrics

“We processed 10,000 alerts this month” tells you nothing about value. “We reduced actual incidents by 30%” tells you much more. Focus on outcomes, not activity.

Here are some real metrics that matter:

- Mean time to detection: From event to identification (target: under 1 hour)

- Investigation efficiency: Time from alert to resolution (target: 4-hour reduction from your baseline)

- False positive rate: Percentage of alerts that require no action (target: under 20%)

- Compliance readiness: Percentage of controls continuously validated (target: over 90%)

- Team utilization: Hours spent on strategic vs. mechanical work (target: 60/40 split)
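Two of the metrics above can be computed directly from alert records. The sketch below assumes a hypothetical `Alert` record with an event time, a detection time, and a flag for whether the alert required action. Field names and the choice to measure detection time only on actionable alerts are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Alert:
    event_time: datetime      # when the underlying event occurred
    detected_time: datetime   # when it was identified
    required_action: bool     # False means the alert was a false positive


def mean_time_to_detection(alerts) -> timedelta:
    """Average event-to-identification delay across actionable alerts."""
    seconds = mean(
        (a.detected_time - a.event_time).total_seconds()
        for a in alerts
        if a.required_action
    )
    return timedelta(seconds=seconds)


def false_positive_rate(alerts) -> float:
    """Fraction of alerts that required no action."""
    return sum(not a.required_action for a in alerts) / len(alerts)
```

Both functions raise on empty input, which is a reasonable signal that there is no baseline yet to compare against.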

The Real ROI Calculation

The financial case is straightforward. Take your team’s total working hours and multiply by the fraction of that time spent on mechanical work. That’s your automation opportunity. For most organizations, it’s 60-70% of total security hours.
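The arithmetic can be written down in a few lines. The function name and the example inputs below (a five-person team, 40-hour weeks, 60% mechanical work) are assumptions for illustration only. Substitute your own measured baseline.

```python
def automation_opportunity_hours(
    team_size: int, hours_per_week: float, mechanical_fraction: float
) -> float:
    """Weekly hours spent on mechanical work across the whole team."""
    if not 0.0 <= mechanical_fraction <= 1.0:
        raise ValueError("mechanical_fraction must be between 0 and 1")
    return team_size * hours_per_week * mechanical_fraction


# Hypothetical example: 5 people x 40 hours x 0.6 is about 120 hours per week.
weekly_hours = automation_opportunity_hours(5, 40, 0.6)
```

This number is an upper bound on the opportunity, not a forecast: it says how many hours are candidates for automation, not how many an agent will actually absorb.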

But the real value isn’t in the hours saved — it’s in what those hours enable. When your team spends less time correlating logs, they spend more time threat hunting. When they spend less time gathering compliance evidence, they spend more time improving security architecture.

Leading Indicators to Track

Watch for these early signs that force multiplication is working:

- Decreased overtime hours (your team is handling the same workload more efficiently)

- Increased proactive findings (they have time to look for problems, not just react)

- Improved audit results (continuous monitoring catches issues before auditors do)

- Higher team satisfaction scores (less drudgery, more interesting work)

The Compound Effect of AI Enhancement

Small improvements compound. A 20% reduction in false positives frees up the triage time those alerts used to consume. That extra time finds more threats earlier. Earlier detection means less damage. Less damage means less recovery time. Less recovery time means more time for prevention.

Within 18 months, organizations that implement AI agents properly can see a complete transformation — not because the agents are magical, but because they free humans to do human work. I’m talking about strategic thinking, creative problem-solving, and relationship building. In other words, the things that actually move the security program forward.

The organizations getting this right aren’t the ones with the biggest security budgets or the most advanced tools. They’re the ones that understand a fundamental truth: in the modern threat landscape, trying to scale humans linearly against exponential growth in complexity is a losing game. The only sustainable path is multiplication — giving every team member the ability to operate at 10x their natural capacity.

This is exactly what we’re building at Edge Delta with our AI Teammates platform. These agents don’t replace your team — they multiply their capabilities. Because the choice isn’t between humans and AI. It’s between teams that multiply their force and teams that burn out trying to do the impossible.

The math only works one way.