Analyze Every Logline with Processors

When it comes to processing observability data, many teams still take a centralize-then-analyze approach: they route all or most of their observability data to a downstream platform before analyzing it. From that central platform, they manually build monitors and customize dashboards as they see fit.

As teams shift toward microservices, the volume of data they generate has grown too large for traditional downstream platforms to handle. Indexes get bogged down and platform performance degrades. Teams are left with two options, neither of which is ideal by today's standards: accept a slower, more costly approach to observability, or drop some of their data.

Analytics derived from this data are crucial for daily monitoring tasks, but teams that don't index all of their data lose visibility into the datasets they discard. They need a way to process data further upstream to keep that visibility, regardless of whether the raw log data is ever indexed.

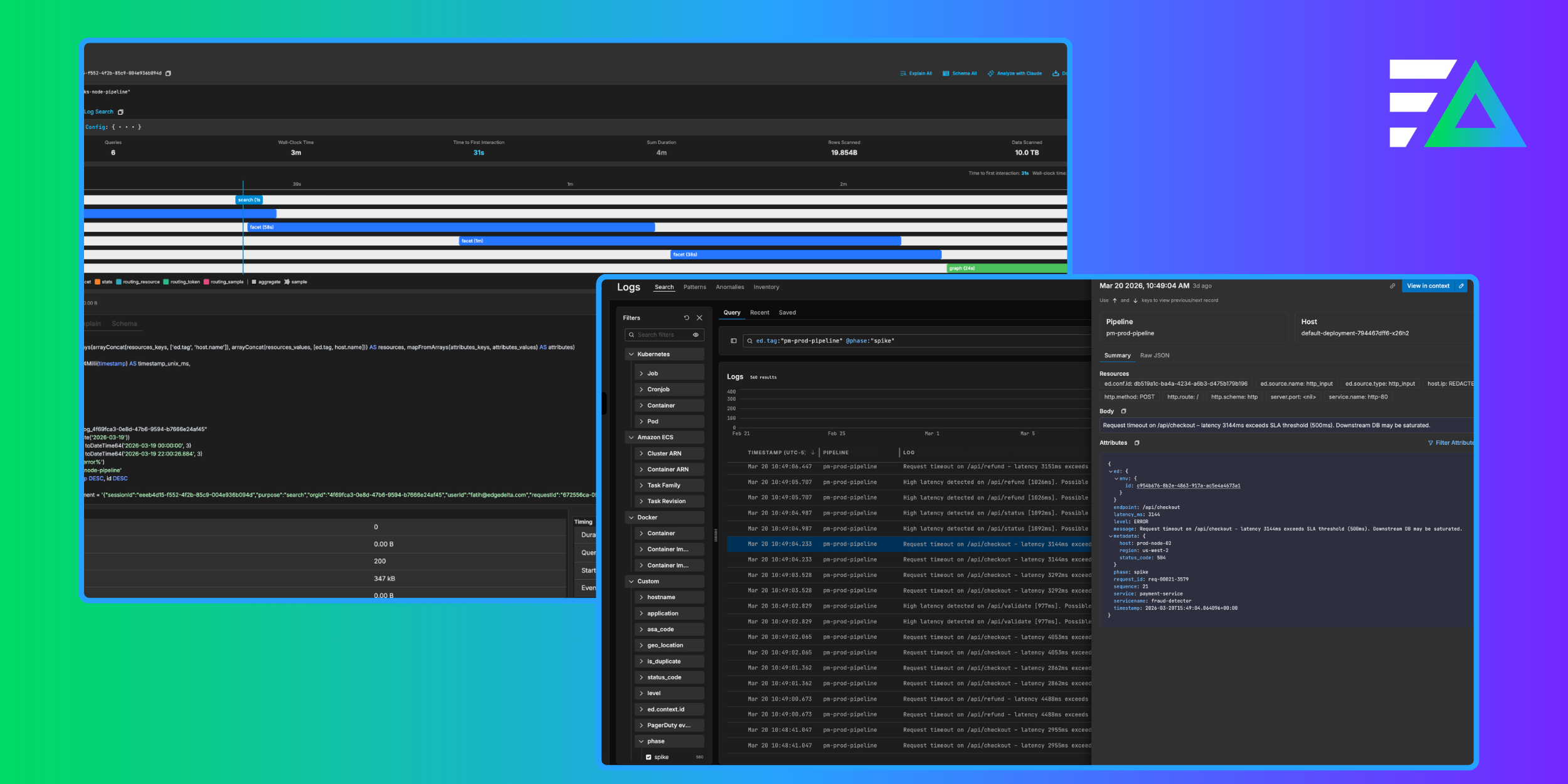

To solve this problem, you can derive analytics from your log data upstream using Edge Delta’s Analytics Processors in Visual Pipelines.

How Edge Delta Processors Work

Edge Delta’s Processors run at the agent level to process (analyze, transform, enrich, or otherwise manipulate) your data as it’s created at the source. In this article, we explore Edge Delta’s Analytics Processors.

Because Processors run at the data source (or as close to it as possible), teams can change where data is processed and derive analytics from it orders of magnitude faster. And since all data is processed and analyzed upstream, teams gain visibility into their full data volume, not just the data they choose to index.

Edge Delta offers several Processors to achieve this. In this post, we focus on two of them: the Log to Metric and Log to Pattern Processors.

Log to Metric Processor

The Log to Metric Processor extracts dimensions from log data to create time series metrics. These metrics can be visualized in our Metrics Explorer or downstream observability tools. Extracting these KPIs from your log data allows you to populate metrics dashboards without ingesting raw log data. As a result, you can dramatically reduce observability costs and gain real-time visibility into datasets you previously had to discard.
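To make the idea concrete, here is a minimal Python sketch of turning loglines into time series metrics. It is not Edge Delta's implementation: the log format, the `logs_to_metrics` function, and the dimension and metric names are all hypothetical. Each matching line is assigned to a fixed time bucket and aggregated by its dimensions (service and status code), so only the resulting metric points need to leave the source.

```python
import re
from collections import defaultdict
from datetime import datetime

# Hypothetical log format: "<ISO timestamp> <level> <service> status=<code> latency_ms=<n>"
LINE_RE = re.compile(
    r"^(?P<ts>\S+) (?P<level>\w+) (?P<service>\S+) "
    r"status=(?P<status>\d{3}) latency_ms=(?P<latency>\d+)$"
)

def logs_to_metrics(lines, bucket_seconds=60):
    """Aggregate loglines into per-bucket request counts and average latency, keyed by dimensions."""
    counts = defaultdict(int)
    latency_sums = defaultdict(int)
    for line in lines:
        m = LINE_RE.match(line)
        if m is None:
            continue  # skip lines that don't match the assumed format
        ts = datetime.fromisoformat(m["ts"])
        # Round the timestamp down to the start of its bucket
        bucket = int(ts.timestamp()) // bucket_seconds * bucket_seconds
        key = (bucket, m["service"], m["status"])
        counts[key] += 1
        latency_sums[key] += int(m["latency"])
    # Emit one metric point per (bucket, service, status) combination, oldest first
    return [
        {
            "timestamp": bucket,
            "service": service,
            "status": status,
            "request_count": counts[(bucket, service, status)],
            "avg_latency_ms": latency_sums[(bucket, service, status)] / counts[(bucket, service, status)],
        }
        for (bucket, service, status) in sorted(counts)
    ]
```

Only a handful of metric points per interval are forwarded, no matter how many raw lines were parsed, which is where the cost savings come from.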

In addition to helping you visualize behavior over time, Edge Delta uses these metrics to support machine learning-enabled anomaly detection. The platform automatically baselines your metrics to establish what’s ‘normal’ for your monitoring KPIs. When a metric lands outside the expected threshold, teams receive automated alerts paired with the data needed for troubleshooting. You no longer have to wait for data to be ingested and processed downstream before an alert fires. Instead, you know immediately when something abnormal occurs, why it’s happening, and where it went wrong. Given how much data companies generate every day, this proactive approach is essential to keeping applications running smoothly.
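The general idea of baselining can be illustrated with a simple statistical stand-in. The sketch below is not Edge Delta's machine learning model; it uses a trailing-window mean and standard deviation (a z-score test), and the `detect_anomalies` name, window size, and threshold are hypothetical choices. A point is flagged when it deviates from the recent baseline by more than `threshold` standard deviations.

```python
from statistics import mean, pstdev

def detect_anomalies(values, window=10, threshold=3.0):
    """Return indices whose value deviates from the trailing-window baseline by more than `threshold` std devs."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]  # the `window` points just before i
        mu = mean(baseline)
        sigma = pstdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as a deviation
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies
```

For example, in a per-minute error count that hovers around 10, a sudden jump to 50 is flagged, while normal jitter of plus or minus one is not. A production system would also account for seasonality and trends, which this sketch ignores.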


Log to Pattern Processor

The Log to Pattern Processor finds common patterns in your logs and groups similar loglines into clusters. As a result, you can summarize a massive volume of log data as a set of unique behaviors. Log Patterns can be consumed through the Edge Delta interface and in downstream observability tooling.
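One simple way to illustrate pattern clustering is to mask the variable parts of each line (IP addresses, IDs, numbers) so that lines describing the same behavior collapse into the same template. This Python sketch is an assumption-laden stand-in, not Edge Delta's clustering algorithm; the mask regexes and the `to_pattern` and `cluster` helpers are hypothetical.

```python
import re
from collections import Counter

# Illustrative masks for variable tokens. Order matters: IPs and UUIDs are
# masked before bare numbers so their digits aren't replaced piecemeal.
MASKS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b"), "<UUID>"),
    (re.compile(r"\b\d+(?:\.\d+)?\b"), "<NUM>"),
]

def to_pattern(line):
    """Replace variable tokens with placeholders to get the line's template."""
    for regex, placeholder in MASKS:
        line = regex.sub(placeholder, line)
    return line

def cluster(lines):
    """Count how many loglines fall into each template."""
    return Counter(to_pattern(line) for line in lines)
```

Thousands of "Connection from ... closed after ... ms" lines, for instance, collapse into one template with a count, which is far easier to scan than the raw lines.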

The Log to Pattern Processor helps with troubleshooting because clustering similar loglines cuts the noise in your log data. Instead of manually sifting through individual loglines, you can understand each event at a glance and quickly identify the highest- or lowest-volume events. As with the Log to Metric Processor, machine learning-enabled anomaly detection is applied to log patterns to identify abnormal spikes in negative sentiment data.
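Once loglines are clustered, ranking the clusters and tagging negative-sentiment patterns is straightforward. In this sketch, the input is assumed to be a `Counter` mapping each pattern to its count, and the keyword list used to decide "negative sentiment" is a hypothetical placeholder, not how Edge Delta classifies sentiment.

```python
from collections import Counter

# Hypothetical keywords that mark a pattern as negative sentiment.
NEGATIVE_KEYWORDS = ("error", "fail", "exception", "timeout", "denied")

def summarize_patterns(pattern_counts, top_n=3):
    """Return the highest- and lowest-volume patterns plus any negative-sentiment patterns."""
    ranked = pattern_counts.most_common()  # sorted by count, highest first
    negative = {
        pattern: count
        for pattern, count in ranked
        if any(keyword in pattern.lower() for keyword in NEGATIVE_KEYWORDS)
    }
    return {
        "highest_volume": ranked[:top_n],
        "lowest_volume": ranked[-top_n:][::-1],  # rarest first
        "negative_sentiment": negative,
    }
```

Feeding the per-interval counts of the negative-sentiment patterns into a baseline check like the z-score test is one way to catch an abnormal spike in, say, payment failures.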

You can also use this Processor to support observability pipelines that route summaries of your log data downstream. For example, say one of your data sources generates a large volume of INFO logs that your team rarely uses. Instead of indexing that data in its raw format, you can send pattern summaries. Applied at scale, this strategy can significantly reduce your observability bill while preserving your team’s visibility into that log data.
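The routing strategy described above can be sketched as a small filter: forward non-INFO lines untouched, and replace INFO lines with one summary record per pattern. Everything here is a hypothetical illustration: the `LEVEL message` log format, the `route` function, the number-only masking, and the JSON field names are assumptions, not an Edge Delta pipeline configuration.

```python
import json
import re
from collections import Counter

NUM = re.compile(r"\b\d+\b")  # mask bare numbers to form a simple pattern

def route(lines):
    """Forward non-INFO lines raw; replace INFO lines with one JSON summary per pattern."""
    raw, info_patterns, samples = [], Counter(), {}
    for line in lines:
        level, _, message = line.partition(" ")  # assumed format: "LEVEL message"
        if level != "INFO":
            raw.append(line)
            continue
        pattern = NUM.sub("<NUM>", message)
        info_patterns[pattern] += 1
        samples.setdefault(pattern, line)  # keep the first raw line as an example
    summaries = [
        json.dumps({"level": "INFO", "pattern": p, "count": n, "sample": samples[p]})
        for p, n in info_patterns.most_common()
    ]
    return raw, summaries
```

Keeping one raw sample per pattern is a deliberate trade-off: the downstream tool still sees what the lines looked like, but stores one record per pattern instead of one per line.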

Take a Proactive Approach to Observability Data

Edge Delta’s approach to observability offers a proactive and efficient solution to handle the increasing volume of data. By using Processors that analyze data at the agent level, teams can extract valuable metrics and troubleshoot issues faster without sacrificing visibility or incurring extra costs.