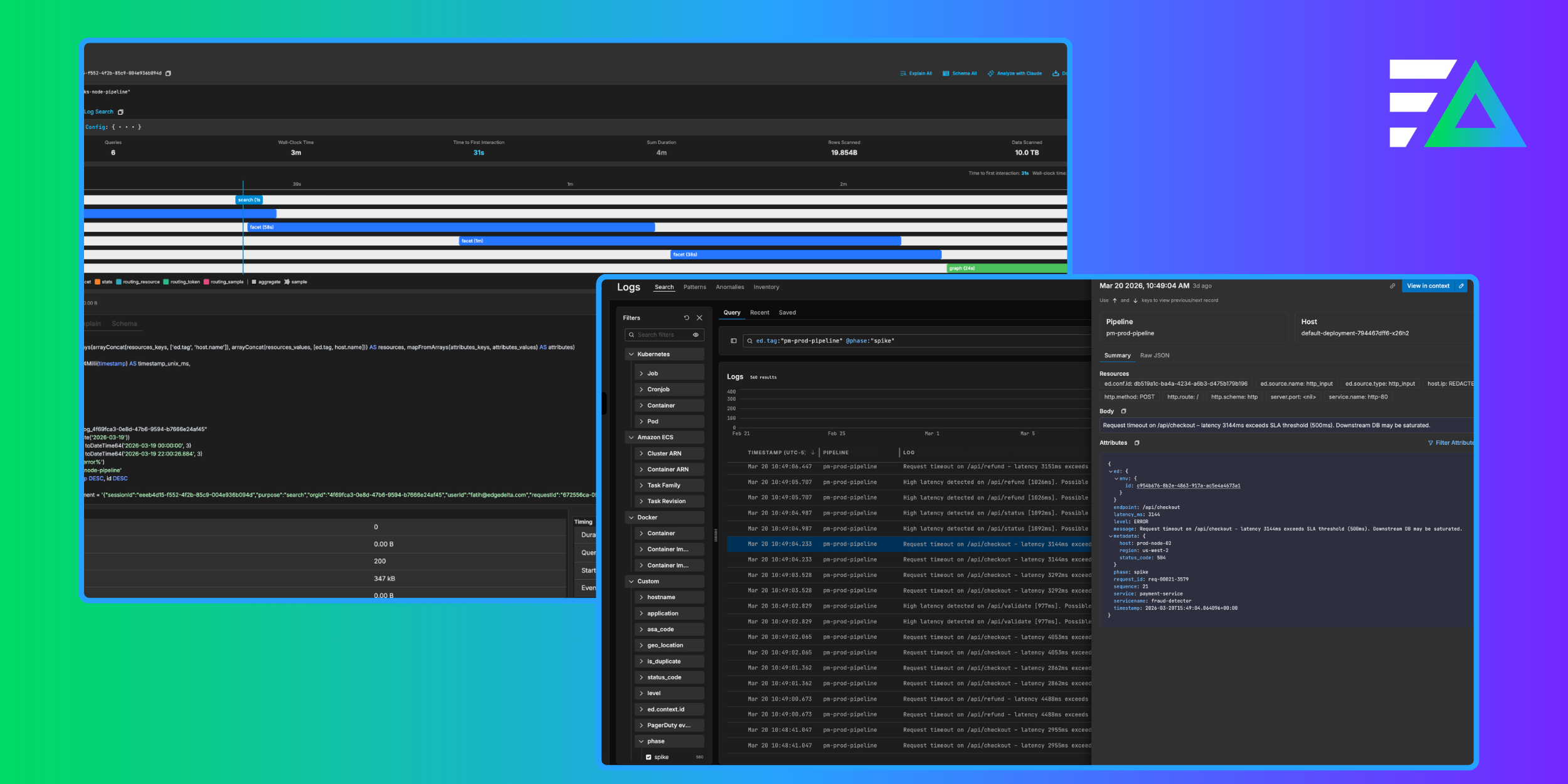

Today, we’re excited to announce Visual Pipelines. This release simplifies telemetry pipeline workflows, while also giving you more transparency and control.

Through a single, point-and-click interface, you can…

- Build pipelines, including the data source, data processing functions (“Processors”), and streaming destinations.

- Test and iterate upon pipelines without having to deploy (and redeploy).

- Monitor pipeline health and ensure every component is working as expected.

Visual Pipelines is available now within Edge Delta.

Why We Built Visual Pipelines

Historically, building and managing pipelines has been a convoluted process. Teams have to build disparate configuration files that reference one another across all their data sources. Writing verbose configs by hand breeds complexity – even more so as you scale. Plus, there’s usually little-to-no support for validating pipeline configurations or monitoring them once in production.

As a result of this approach, we often see…

- “Tribal knowledge” trapped with a small number of experts, who end up owning pipeline administration.

- Difficulty onboarding new team members and scaling pipelines across the organization.

- No autonomy or self-service when it comes to building pipelines.

We aim to solve these challenges with Visual Pipelines. When you use Edge Delta, you can expect to see the following benefits.


Simplify Pipeline Workflows

Our “North Star” with this release is simplicity and ease of use. We’ve delivered on this in two primary ways:

- By providing an experience that uses clicks and text forms, versus complex config files.

- By centralizing everything you need to build, manage, and monitor your pipelines into one interface (more on this in the sections that follow).

This approach eliminates the need to rely on a few experts to build and manage pipelines. As a result, it becomes easier to scale pipelines across your teams and data sources.

Transparency Pre-Deployment and In Production

Visual Pipelines dramatically improves the transparency of and control over pipeline workflows. As our Director of Product, Zach Quiring, puts it, “anyone should be able to come in and digest what their pipeline is doing, from what datasets are involved to what manipulations are happening to where your data ultimately lands.” We focused on this area because we want to instill confidence that every component of your pipeline is doing what you expect it to.

What’s that look like in practice?

- As you’re building pipelines, you can test Processors on the appropriate dataset, enabling quicker iteration – no more “guessing-and-checking.”

- When you’re managing pipelines, you can understand each component within a pipeline – and how the components work with one another – at a glance.

- When you’re monitoring pipelines, it’s clear which components are healthy and which are not.

Enable Developer Self-Service

As a byproduct of these points, we also hope to drive developer self-service. No longer do operations teams have to build configs that account for each team and their unique requirements. Instead, developers can go in and build pipelines to their liking.

We’re also enabling self-service by improving discoverability. Your teammates can now understand all the potential functionality and pipeline features when designing a pipeline, versus having to know upfront what configs to build.

(Spoiler alert: Developer self-service is an area we’re going to continue to bolster in the short term. We’re working on new additions that will make it even easier to delegate data streams and monitor team spend. Stay tuned!)

Let’s Take a Deeper Look at What’s New

Route Data From Any Source to Any Destination

Route data from any number of sources to any destination in a matter of clicks. Edge Delta provides over 50 out-of-the-box integrations. So, you can collect data and ship it to its most suitable destination – whether that be an observability platform, SIEM, archive storage, alerting platform, or more.
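To make the routing idea concrete, here is a minimal sketch in Python. It is not Edge Delta's API or configuration format: the `Event` shape, the `ROUTES` table, and the destination names are all assumptions made up for illustration. Each route pairs a match rule with a destination, and an event is delivered to every destination whose rule matches.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

# Hypothetical event shape: a flat dict of string fields.
Event = Dict[str, str]
# A route is (match rule, destination name). Both are illustrative only.
Route = Tuple[Callable[[Event], bool], str]

ROUTES: List[Route] = [
    (lambda e: e.get("level") == "ERROR", "alerting"),  # errors go to alerting
    (lambda e: e.get("source") == "auth", "siem"),      # auth events go to the SIEM
    (lambda e: True, "archive"),                        # everything is archived
]


def route(events: List[Event], routes: List[Route]) -> Dict[str, List[Event]]:
    """Deliver each event to every destination whose rule matches it."""
    delivered: Dict[str, List[Event]] = defaultdict(list)
    for event in events:
        for matches, destination in routes:
            if matches(event):
                delivered[destination].append(event)
    return dict(delivered)


events = [
    {"source": "auth", "level": "ERROR", "msg": "login failed"},
    {"source": "web", "level": "INFO", "msg": "page served"},
]
by_destination = route(events, ROUTES)
```

In this sketch the failed login lands in all three destinations, while the web request is only archived. A point-and-click builder expresses the same fan-out as connections between nodes rather than as code.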

Easily Manipulate Your Data with Processors

Our Processors allow you to transform, enrich, analyze, and optimize your data without having to create and manage complicated configuration files. As you create a new pipeline, you can apply Processors by selecting from a simple menu. At launch, we support over 15 different Processors, including enrich, transform, mask, filter, log to metric, log to pattern, and route. This makes processing your data upstream a more repeatable and scalable effort as you add more sources to Edge Delta.
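Conceptually, a chain of Processors behaves like a series of small functions applied to each log line in order. The Python sketch below illustrates that idea only. The `Processor` type, `mask_emails`, `drop_debug`, and `run_pipeline` are hypothetical names, not Edge Delta's implementation.

```python
import re
from typing import Callable, Iterable, List, Optional, Sequence

# Assumption for illustration: a processor takes one log line and returns
# the (possibly changed) line, or None to drop it.
Processor = Callable[[str], Optional[str]]


def mask_emails(line: str) -> Optional[str]:
    """Mask-style processor: replace anything that looks like an email address."""
    return re.sub(r"[\w.+-]+@[\w-]+\.[\w.-]+", "<email>", line)


def drop_debug(line: str) -> Optional[str]:
    """Filter-style processor: drop lines that contain the word DEBUG."""
    return None if " DEBUG " in f" {line} " else line


def run_pipeline(lines: Iterable[str], processors: Sequence[Processor]) -> List[str]:
    """Apply each processor in order; stop early if a line is dropped."""
    output: List[str] = []
    for line in lines:
        current: Optional[str] = line
        for processor in processors:
            current = processor(current)
            if current is None:
                break
        if current is not None:
            output.append(current)
    return output


sample = [
    "INFO login ok user=ann@example.com",
    "DEBUG cache miss key=42",
]
processed = run_pipeline(sample, [drop_debug, mask_emails])
```

Here the DEBUG line is filtered out and the email address in the INFO line is masked. Adding another source means reusing the same list of processors, which is the repeatability the menu-driven approach is aiming for.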

Test Processors Before Deploying in Production

As you’re creating and editing Processors, Edge Delta also helps you understand how the data is being changed. Using a sample of your logs, you can view a “before and after” of what you’ve applied in real time. This allows you to iteratively test and demonstrate how your data is changing as you update configurations. In other words, you don’t have to wait until you deploy into production and are ingesting data to know if your data is being processed the way it should.
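The “before and after” idea can be sketched in a few lines of Python: run a processor over a small sample and pair each input with its output. This is an illustration under stated assumptions, not how Edge Delta implements its preview. `mask_ipv4` and `preview` are hypothetical helpers.

```python
import re
from typing import Callable, List, Optional, Tuple


def mask_ipv4(line: str) -> Optional[str]:
    """Example processor (hypothetical): mask anything shaped like an IPv4 address."""
    return re.sub(r"\b(?:\d{1,3}\.){3}\d{1,3}\b", "<ip>", line)


def preview(
    sample: List[str], processor: Callable[[str], Optional[str]]
) -> List[Tuple[str, Optional[str]]]:
    """Return (before, after) pairs so changes can be inspected before deploying."""
    return [(line, processor(line)) for line in sample]


sample_logs = [
    "GET /health from 10.0.0.7",
    "service started",
]

for before, after in preview(sample_logs, mask_ipv4):
    if after is None:
        status = "dropped"
    elif after != before:
        status = "changed"
    else:
        status = "unchanged"
    print(f"[{status}] {before!r} -> {after!r}")
```

Because the preview runs against a fixed sample rather than live traffic, you can adjust the processor and rerun it as many times as you like before anything reaches production.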

How to Get Started

Visual Pipelines is available now within Edge Delta. Try it for yourself.