Kubernetes, launched as an open source project in 2014 (with roots at Google stretching back to the 2000s), is a mature technology at this point. Businesses of all sizes are moving to microservice architectures in order to deliver software quickly and reliably. That shift has made a container orchestration tool like Kubernetes a key part of the tech stack for keeping distributed systems available, reliable, and secure.

However, keeping those systems healthy requires careful management of the three pillars of observability: logs, metrics, and traces. Because of that, Kubernetes observability has become a key strategy for any DevOps team.

If you’re like the typical IT, DevOps, or site reliability engineer (SRE), you have been working with Kubernetes for some time now. You may even feel confident that you can manage it successfully.

When it comes to observability, however, we’re willing to bet that your Kubernetes strategy is not working as well as it should. Sure, you may be collecting data from your Kubernetes resources (nodes, pods, and so on) and then analyzing it. You may even be correlating some of it by comparing how data from nodes, pods, and other resources interrelates.

But are you able to analyze all of your production data? Are you gaining insights and spotting anomalies quickly enough to act on them? Probably not, if you’re settling for a conventional Kubernetes observability strategy.

That’s why it’s time to take Kubernetes observability to the next level. This article explains how to do that by discussing how DevOps teams commonly approach Kubernetes observability today, pointing out where conventional observability strategies for K8s fall short, and identifying actionable tips for getting the most out of Kubernetes observability.

Whether you’re just starting out on your Kubernetes journey, or you can write YAML manifests in your sleep, this blog will help you plug the gaps in your approach to Kubernetes observability and ensure that you not only collect and analyze observability data, but do so in a way that drives meaningful, real-time insights.

The Kubernetes Observability Journey

To explain why the typical Kubernetes observability strategy falls short, let’s start by discussing the steps that teams tend to follow when they set up a Kubernetes environment and try to figure out how to observe it – a process that you could call the Kubernetes observability journey.

In general, the journey involves three main stages:

- The ignorant stage: At first, teams tend to try to observe Kubernetes using the strategies they apply to non-cloud native environments. They discover soon enough that because Kubernetes involves so many different components, you can’t monitor or observe it in the way you’d monitor or observe a VM, or even a cloud service.

- The multi-faceted stage: That leads teams to start collecting and analyzing data from each Kubernetes component (nodes, pods, services, networks, storage, and so on) separately. They gain visibility into every part of Kubernetes. But the problem they run into is that they don’t know how a problem in one component may relate to a problem in another, because they are not correlating their data.

- The correlative stage: So, they start correlating their data. They merge logs and metrics from pods with those from nodes, for example, to try to understand whether a performance issue in a pod was related to a failure in the node. That’s great, but it’s often still not enough on its own to deliver full, actionable, real-time insights.
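To make the correlative stage concrete, here is a minimal Python sketch of the kind of join it involves: attaching the nearest node metric sample to each pod event. The record layout, the field names (`ts`, `node`, `memory_used_pct`), the sample values, and the 30-second matching window are all assumptions made for illustration, not the format of any particular tool.

```python
def correlate(pod_events, node_samples, max_gap_seconds=30):
    """Pair each pod event with the closest-in-time sample from the same node.

    Returns a list of (event, sample) tuples; sample is None when no node
    sample falls within max_gap_seconds of the event.
    """
    matches = []
    for event in pod_events:
        # Keep only samples from the same node that are close enough in time.
        candidates = [
            s for s in node_samples
            if s["node"] == event["node"]
            and abs(s["ts"] - event["ts"]) <= max_gap_seconds
        ]
        nearest = min(
            candidates,
            key=lambda s: abs(s["ts"] - event["ts"]),
            default=None,
        )
        matches.append((event, nearest))
    return matches


# Hypothetical sample data, already collected from pods and nodes.
pod_events = [
    {"ts": 100, "pod": "api-1", "node": "node-a", "event": "OOMKilled"},
    {"ts": 200, "pod": "web-2", "node": "node-b", "event": "CrashLoopBackOff"},
]
node_samples = [
    {"ts": 95, "node": "node-a", "memory_used_pct": 97},
    {"ts": 60, "node": "node-b", "memory_used_pct": 41},
]

matches = correlate(pod_events, node_samples)
for event, sample in matches:
    print(event["pod"], event["event"], sample)
```

Note that a join like this only runs after both datasets have been collected, which is part of why correlation alone does not produce real-time insight.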

A majority of teams today that manage Kubernetes are stuck in the third stage of the observability journey. They’re collecting, correlating, and analyzing data from across their clusters. But they’re still finding that pods are failing or performance is degrading, and they don’t always know about the issue soon enough to prevent critical disruptions in production environments.

Why Conventional K8s Observability Falls Short

The question now becomes: why does the typical Kubernetes observability strategy today fall short, even if it involves multi-faceted data collection and correlation? If you’re collecting as much telemetry data as you can, and correlating it in an effort to identify root-cause issues, what more could you possibly be doing to improve upon your observability workflow?

Well, a few things. But we’ll get to the discussion of what to do differently a bit later. For now, let’s observe specifically why conventional Kubernetes observability strategies fall short.

They’re Too Slow

Probably the biggest shortcoming with the way most teams observe Kubernetes is that they simply don’t gain insights quickly enough.

It’s easy enough to see why: when you are collecting large amounts of data from a half-dozen different Kubernetes components, then trying to correlate it all, you spend a lot of time collecting and correlating data before you can actually identify and understand problems. The result is an inability to spot and remediate issues in real time.

This means that the typical team ends up using Kubernetes observability tools and practices to fix problems only after they have occurred. You stare at your dashboards to figure out why your pod crashed after the fact. That’s fine, but it’s not nearly as good as knowing that your pod is having an issue – and being able to fix it – before the crash actually occurs.

They Waste Resources

A second problem that you run into when you try to collect and correlate vast amounts of data from across the various layers of a Kubernetes environment is that you devote so many CPU and memory resources to data collection, processing, and analysis that it can undercut the performance of your actual applications.

This assumes, of course, that you run your observability tools (Fluentd or Prometheus, for example) directly in your Kubernetes clusters, and that those tools are not designed to run efficiently in a distributed environment like Kubernetes. Most are not, because few observability tools were designed to be cloud-native from the start. The alternative is to run them externally, but that just leads to more delays in collecting and processing data because you have to get it out of Kubernetes before you can do anything with it.

So, teams are stuck with an unhappy choice: either tying up a whole bunch of resources on observability which could be better used to run actual production applications, or moving data out of Kubernetes and waiting even longer for observability insights.

They Cost Too Much

Cost is another major challenge with most Kubernetes observability strategies. Traditional observability tools typically charge based on the volume of data ingested or indexed in their platforms.

This pricing model may have sufficed years ago, when data volumes were comparatively small. But in a Kubernetes environment, data growth has exploded – and so has cost if you pay based on how much data you ingest or index.

It has become cost-prohibitive for many companies to analyze all of their data using the old model. Businesses end up having to neglect datasets, which undercuts observability.

They’re Siloed

A final key challenge of conventional Kubernetes observability strategies is that they exist on an island of their own. Because Kubernetes is so different from other types of environments, teams end up deploying separate tools and separate workflows just for observing Kubernetes.

As a result, Kubernetes observability becomes disconnected from your broader observability strategy, adding inefficiency and confusion to your workflows.

A Better Approach to Kubernetes Observability

That’s what’s wrong with Kubernetes observability as many teams know it today. The solution is not to rebuild your observability strategy from scratch. Instead, it’s to embrace an edge observability solution that integrates with and complements your existing observability stack.

The key changes to make include:

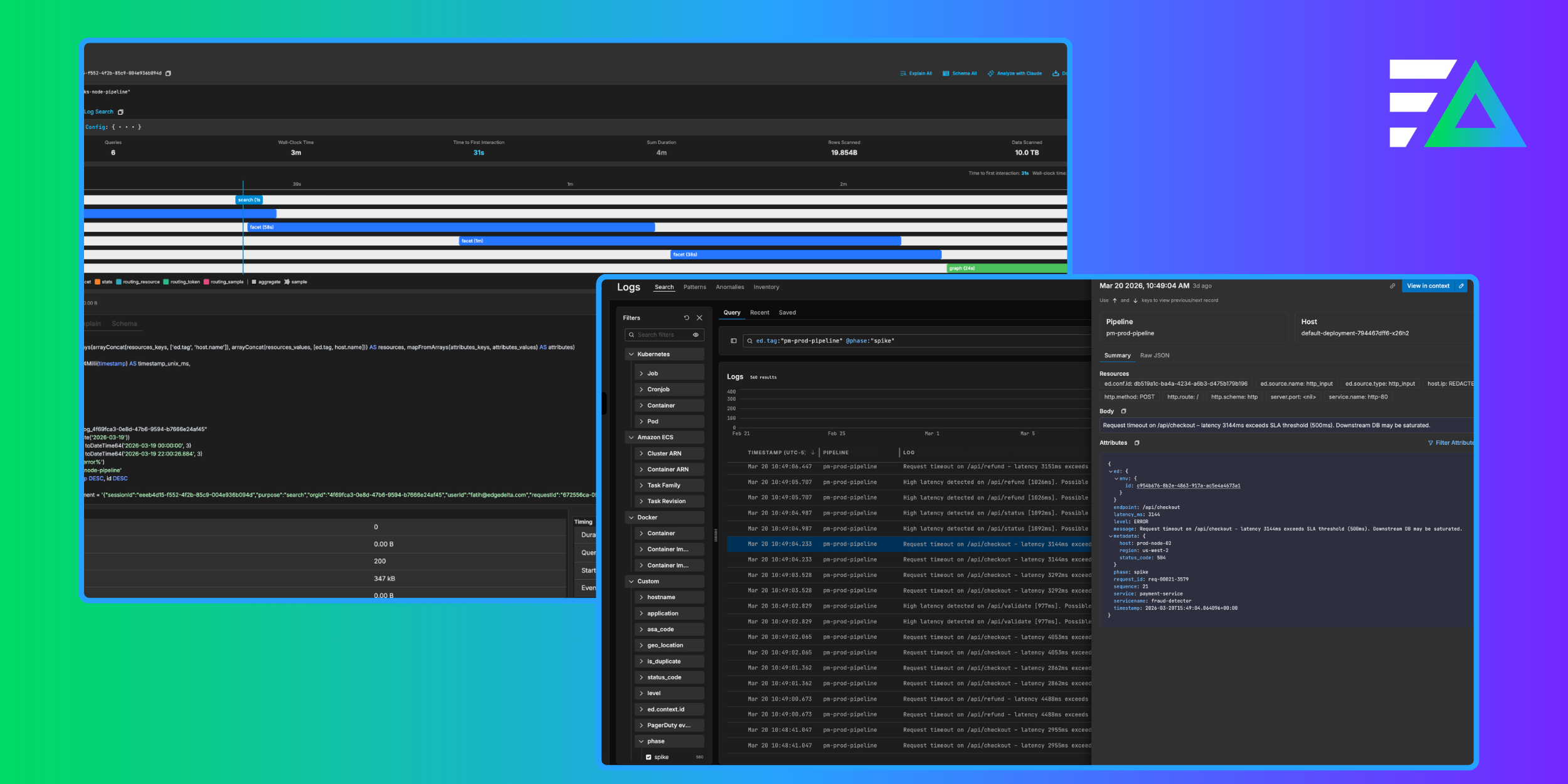

- Analyze data at the source: The sooner you collect and analyze data from Kubernetes’s various components, the faster you can detect and fix problems. This is why you should use distributed stream processing to analyze Kubernetes data at the source rather than waiting to centralize first.

- Use a distributed observability architecture: Along similar lines, make your observability tooling as distributed as the Kubernetes infrastructure itself. Rather than aggregating all of your data into a central location, deploy observability tools across your cluster that can process data at its source. Doing so significantly reduces the CPU and memory resources expended on observability, while also speeding time to insights.

- Correlate data streams, not data sources: Instead of trying to merge or otherwise interrelate source data like logs and metrics to achieve data correlation, correlate data streams. This way, you can analyze data in real time, without a time-consuming batch processing step.

- Optimize data storage: Edge observability lets you decouple where data is analyzed from where it’s stored. Logs, metrics, and traces are turned into insights, statistics, and aggregates that stream to your central observability platform, which costs dramatically less than ingesting all of the raw data using a conventional observability stack. In parallel, you can transfer the raw data to low-cost storage.
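As a rough illustration of analyzing data at the source and forwarding only aggregates, the Python sketch below condenses raw log records into one summary per time window. The `WindowSummary` type, the `summarize_at_source` function, the record layout, and the 60-second window are hypothetical choices made for this example; a real edge agent would read from log files or a stream rather than an in-memory list.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class WindowSummary:
    """Aggregate for one time window: the only thing sent to the central platform."""
    window_start: int
    total: int = 0
    by_level: Counter = field(default_factory=Counter)


def summarize_at_source(records, window_seconds=60):
    """Turn (timestamp, level, message) records into per-window summaries.

    Yields one WindowSummary per window instead of every raw record.
    Assumes records arrive in timestamp order.
    """
    current = None
    for timestamp, level, _message in records:
        # Align the timestamp to the start of its fixed-size window.
        start = int(timestamp) - int(timestamp) % window_seconds
        if current is None or start != current.window_start:
            if current is not None:
                yield current  # the previous window is complete
            current = WindowSummary(window_start=start)
        current.total += 1
        current.by_level[level] += 1
    if current is not None:
        yield current  # flush the last open window


# Hypothetical sample data: (unix_timestamp, level, message)
sample = [
    (0, "INFO", "started"),
    (10, "ERROR", "connection refused"),
    (59, "INFO", "retrying"),
    (61, "ERROR", "connection refused"),
    (119, "ERROR", "timeout"),
]

summaries = list(summarize_at_source(sample))
for s in summaries:
    print(s.window_start, s.total, dict(s.by_level))
```

Here five raw records become two summaries. In this sketch the raw records are simply discarded; in the approach described above they would be sent to low-cost storage in parallel.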

An Efficient Approach to Kubernetes Observability

When you do these things, you reach the fourth stage of the Kubernetes observability journey. If we had to give that stage a name, we’d call it the efficient stage because it makes your observability workflows efficient in multiple ways:

- Resource efficiency: They use CPU and memory resources efficiently.

- Cost efficiency: They minimize costs.

- Time efficiency: They yield insights as quickly as possible.

Conclusion

Today’s teams need to replace conventional Kubernetes observability strategies with approaches that make full use of distributed observability architectures. Doing so minimizes waste and delivers faster, cheaper, and more actionable insights into Kubernetes performance challenges.